There was supposedly a time in colonial India when the British government was concerned about the number of venomous snakes in Delhi. The government established a bounty, offering a reward for every dead cobra handed in to the administration. Initially the strategy seemed a great success, with large numbers of snakes killed for the reward. In time, however, enterprising Delhi locals began farming cobras for income, and the government was flooded with snake skins without seeing any further decrease in the wild cobra population. When the government eventually learned of the snake farming, it scrapped the reward program, and the cobra farmers responded by releasing their now-worthless snakes. As a result, the wild cobra population ended up larger than before, making the original problem worse.

This kind of perverse outcome, where the apparent solution to a problem makes the situation worse, has become known as the Cobra Effect. The colonial government's underlying problem was that it was dealing with a complex adaptive system. In such systems it is hard to predict the outcomes of our actions, because the elements of the system interact in unexpected ways.

Complex adaptive systems can make important decisions particularly challenging. To help you navigate these challenges, we have developed some guidance to help you understand the complexity of the systems you work with and make better decisions within them.

Complex adaptive systems

Complex adaptive systems are made up of many components that interact with each other, and the behaviour of each component may change in response to the behaviour of the others. These systems appear in many different domains and scientific fields. Typical examples include Earth's climate, the economy, the human body, natural ecosystems, traffic, the stock market, businesses and governments.

The difficulty with complex adaptive systems comes from the many relationships and dependencies between their components. These relationships give rise to properties such as feedback loops, non-linear effects and adaptation. Sometimes a large change in one component has little effect on the system as a whole, because the system adapts to maintain the status quo. At other times, relatively small changes can have surprisingly large, non-linear impacts (sometimes referred to as the Butterfly Effect).

Why does this matter?

This is important because our usual approaches to understanding and making decisions often do not work well in these environments. Standard approaches to problem solving and decision making are based on identifying the important components and how they might affect outcomes. We try to boil the system down to clear 'cause and effect' relationships and use this model of the system to make predictions. This approach typically involves first-order, linear thinking (e.g. if I change A, the result will be B). Unfortunately, it is often not possible to simplify complex adaptive systems in this way. Each component of the system interacts with the others continuously and unpredictably, upsetting our predictions.

That unintended system-level consequences arise from even the best-intentioned individual-level actions has long been recognized. But the decision-making challenge remains for a couple of reasons. First, our modern world has more interconnected systems than before. So we encounter these systems with greater frequency and, most likely, with greater consequence. Second, we still attempt to cure problems in complex systems with a naïve understanding of cause and effect.

Michael J. Mauboussin, from Think Twice: Harnessing the Power of Counterintuition

Another challenge with complex systems is that individual decision makers will always struggle to see the entire system.

Most executives believe they can take in and make sense of more information than research suggests they actually can. As a result, they often act prematurely, making major decisions without fully comprehending the likely consequences for the system.

Gökçe Sargut and Rita Gunther McGrath, from Learning to Live with Complexity, Harvard Business Review

We are also poor at predicting the effects of rare events, because they don't happen often enough for us to observe their impact on the system.

These challenges mean that complex adaptive systems create difficulties for leaders of all kinds of organisations. Risk management, forecasting and decision making all become more difficult, and each requires new approaches.

What should we do?

While complex adaptive systems pose a significant challenge to leaders, there are some simple steps that you can take to help with making decisions in these systems.

Map the system

The first step in grappling with a complex adaptive system is to increase your understanding of it. A system map is a great tool to help understand the structure of a system, and how different components interact. There are different methods for system mapping, of varying sophistication. We opt for a simple process as follows:

- Brainstorm a list of the key components, elements, agents and actors involved in the system. Write each one in a circle, spread out across a sheet of paper.

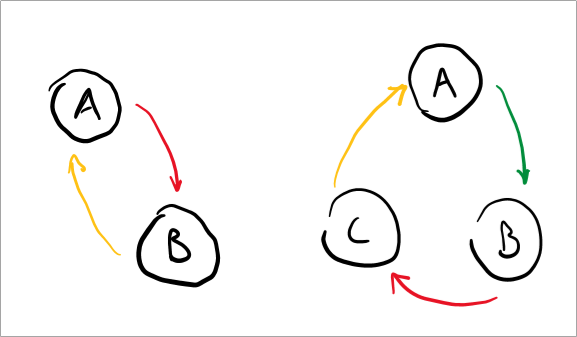

- Ask yourself, “which of these are inter-related or dependent on each other?”. Where you identify a relationship, draw an arrow between the components and note what you believe to be the strength of the relationship (weak, moderate, or strong). It can help to do this with coloured pens (e.g. green for weak, yellow for moderate, red for strong).

The process of developing the map often yields greater understanding, new insights and identification of knowledge gaps. Once developed, it also becomes a great tool for communicating and discussing the system with others.
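If you want a digital copy of your map that you can sort and query, the two mapping steps translate naturally into a directed graph: components are nodes and arrows are links rated weak, moderate or strong. The following is a minimal Python sketch under that assumption. The `SystemMap` class, the numeric scores for each strength, and the cobra-story components are hypothetical illustrations, not part of the mapping method itself.

```python
from dataclasses import dataclass, field

# Hypothetical numeric scores for the weak/moderate/strong ratings,
# used only so that links can be sorted by strength.
STRENGTH_SCORE = {"weak": 1, "moderate": 2, "strong": 3}


@dataclass
class SystemMap:
    """A hypothetical system map: components (circles) and arrows (links)."""
    components: set[str] = field(default_factory=set)
    # (source, target) -> strength label ("weak", "moderate" or "strong")
    links: dict[tuple[str, str], str] = field(default_factory=dict)

    def add_component(self, name: str) -> None:
        self.components.add(name)

    def add_link(self, source: str, target: str, strength: str) -> None:
        """Draw an arrow from source to target with a strength rating."""
        if strength not in STRENGTH_SCORE:
            raise ValueError(f"unknown strength: {strength!r}")
        for name in (source, target):
            if name not in self.components:
                raise KeyError(f"add {name!r} as a component first")
        self.links[(source, target)] = strength

    def strongest_links(self) -> list[tuple[str, str, str]]:
        """Return links ordered from strongest to weakest (stable for ties)."""
        return sorted(
            ((s, t, level) for (s, t), level in self.links.items()),
            key=lambda link: STRENGTH_SCORE[link[2]],
            reverse=True,
        )

    def unconnected(self) -> set[str]:
        """Components with no arrows at all, which may point to knowledge gaps."""
        touched = {name for pair in self.links for name in pair}
        return self.components - touched


# Illustrative map loosely based on the cobra story (hypothetical ratings).
cobra_map = SystemMap()
for name in ["bounty", "dead cobras handed in", "cobra farming",
             "wild cobras", "government awareness"]:
    cobra_map.add_component(name)
cobra_map.add_link("bounty", "dead cobras handed in", "strong")
cobra_map.add_link("bounty", "cobra farming", "moderate")
cobra_map.add_link("cobra farming", "dead cobras handed in", "strong")
cobra_map.add_link("dead cobras handed in", "wild cobras", "weak")

for source, target, strength in cobra_map.strongest_links():
    print(f"{source} -> {target} ({strength})")
print("Unconnected:", cobra_map.unconnected())
```

Listing components with no arrows is one simple way to spot places where your understanding of the system may be incomplete; it is a prompt for discussion rather than a conclusion.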

Look out for feedback loops

Now that you've developed your system map, you can use it to identify feedback loops, also known as 'coupling'. Feedback loops appear on the map as a small number of components linked in a circular path, where each component affects the next and the last affects the first again (for example, A affects B, B affects C, and C affects A).
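Looking for feedback loops on a map is the same as looking for cycles in a directed graph. The sketch below, a hypothetical helper called `find_feedback_loops` written in plain Python, lists every circular path in a small map. The example links are an illustrative reading of the cobra story, not data from it.

```python
from collections import defaultdict


def find_feedback_loops(links: list[tuple[str, str]]) -> list[list[str]]:
    """Return every simple cycle (feedback loop) in a directed map.

    Each loop is reported once, starting from its alphabetically first
    component. The search is exhaustive, which suits a hand-drawn map
    but not very large graphs.
    """
    graph: dict[str, list[str]] = defaultdict(list)
    for source, target in links:
        graph[source].append(target)

    loops: list[list[str]] = []
    for start in sorted(graph):
        # Depth-first search that only visits components sorting after
        # `start`, so each loop is found exactly once (from its first member).
        stack = [(start, [start])]
        while stack:
            node, path = stack.pop()
            for nxt in graph.get(node, ()):
                if nxt == start:
                    loops.append(path)  # arrived back where we began
                elif nxt > start and nxt not in path:
                    stack.append((nxt, path + [nxt]))
    return loops


# Hypothetical links: bounty payments encourage farming, farming produces
# more cobras to hand in, and more handed-in cobras mean more payments.
cobra_links = [
    ("bounty", "cobra farming"),
    ("cobra farming", "dead cobras handed in"),
    ("dead cobras handed in", "bounty payments"),
    ("bounty payments", "cobra farming"),
    ("dead cobras handed in", "wild cobras"),
    ("wild cobras", "dead cobras handed in"),
]

for loop in find_feedback_loops(cobra_links):
    print(" -> ".join(loop + [loop[0]]))
```

Starting each loop from its alphabetically first component avoids reporting the same loop several times as rotations of itself. For larger maps, a library routine such as NetworkX's `simple_cycles` would be a more efficient choice.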

Shift your decision-making approach

Within complex adaptive systems, it's important to adjust your decision-making process. One of the simplest places to start is when identifying options and alternatives: the potential courses of action you could take and need to choose between.

When generating courses of action in a complex system, use the following prompts:

- Try to limit or eliminate the need for making accurate predictions. Instead, consider small trials that allow you to test the waters before launching into a larger commitment. These small investments should give you the opportunity, but not the obligation, to make further investments later on. Ask “what small trials, experiments, tests or bets could we make, which are reversible and won’t break the bank?”

- Consider what actions in the past have delivered the desired outcome in this system. While the past behaviour of a complex system may not predict its future behaviour, the history of the system is still important to consider. Ask “what has delivered the outcomes we’re looking for in the past (in this or a very similar system)?”

Where to go next?

- Check out our book reviews

- We offer a range of tools and resources, including our free Team Alignment Canvas and the Strategic Planning Toolkit.

How can we help you?

If you’re an aspiring or established leader, we’d love to support your development.

Here are three ways:

- Subscribe to our free newsletter – we offer weekly actionable insights, expert strategies and inspiring content on leadership, management and personal development

- Connect with us on LinkedIn – we post practical advice on management and leadership every day

- Check out our range of practical tools, most of which are free to download

We're Impact Society – join more than 20,000 aspiring and established leaders from almost 100 countries who are changing the world, one team at a time.
